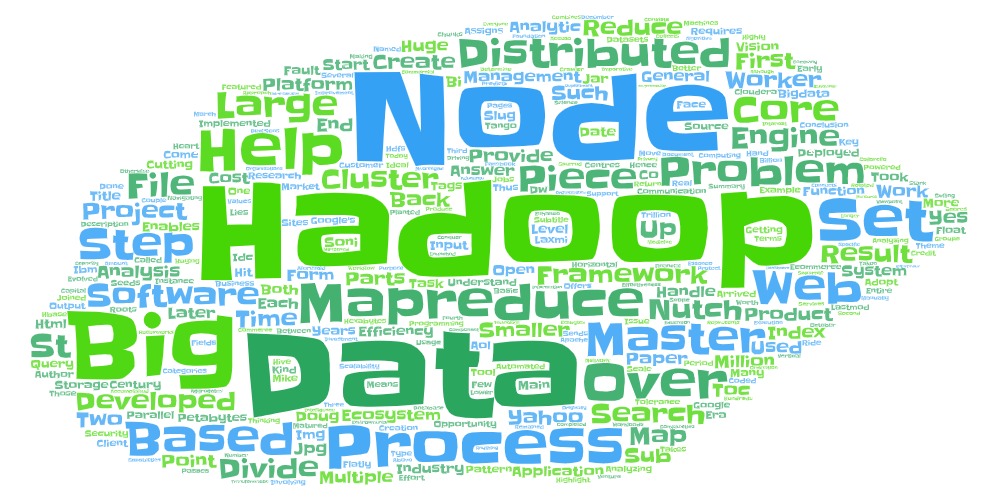

Getting Started with Hadoop

Big data analysis helps organizations draw conclusions from the data points it produces. The datasets involved can be very large, on the order of petabytes or exabytes.

Hadoop is a big data management framework of the 21st century, powered by distributed processing and distributed data storage. Its appeal lies in the cost effectiveness and efficiency that such distributed processing offers.

However, it is important to understand what it means to create a Hadoop ecosystem. Such an effort requires an entire ecosystem of support, and a platform for implementation that has to be developed and matured over time. From a developer’s viewpoint, the face of Hadoop may be a set of JAR files deployed over a set of machines, providing services for navigating and working with distributed files and enabling communication between a client and the Hadoop cluster for data analysis. The thinking, vision and systems behind this end product, however, took several years to build.

The seeds of Hadoop were planted in 2002, when Doug Cutting and Mike Cafarella set out to create a better open-source search engine. This project, known as Nutch, can search over 700 million web sites and over a trillion web pages. In essence, the search engine was the first vision behind the Hadoop ecosystem.

The first piece is the core engine, which has its roots in the Nutch project and later evolved on the basis of Google’s File System paper (October 2003) and MapReduce paper (December 2004). These papers helped automate steps that had been done manually in Nutch. In early 2006, when Doug Cutting joined Yahoo and set up a 300-node research cluster there, the storage and processing parts of Nutch were rebuilt along the lines of the Google File System and MapReduce and split out to form Hadoop, an open-source Apache Software Foundation project. The Nutch web crawler remained a separate project.

MapReduce was developed by Google in 2004. It is a programming framework for processing, generating and indexing large sets of data on the web. Google developed MapReduce as a general-purpose execution engine that handles the complexities of network communication, parallel programming and fault tolerance for any kind of analytic application, whether hand-coded or based on an analytics tool.

MapReduce is a framework for processing highly distributable problems across huge datasets. It is an algorithm-based framework that solves distributed problems using a divide-and-conquer approach across a cluster of nodes. MapReduce jobs are divided into two parts. The “Map” function divides a query into multiple parts and processes data at the node level. The “Reduce” function aggregates the results of the “Map” function to determine the “answer” to the query. The cluster consists of a master node and worker nodes: the master node maps the input into smaller sub-problems and distributes the work, the worker nodes process these smaller problems and return their answers to the master node, and the master node then reduces the set of answers into the final result.
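As a minimal sketch of the two functions described above, the following single-process Python example counts words. The names `map_words`, `reduce_counts` and `run_job` are illustrative assumptions and not part of the Hadoop API; on a real cluster the map and reduce calls would run on separate nodes.

```python
from collections import defaultdict


def map_words(line):
    """Map: emit a (word, 1) pair for every word in one line of input."""
    return [(word.lower(), 1) for word in line.split()]


def reduce_counts(word, counts):
    """Reduce: aggregate all counts emitted for a single word."""
    return word, sum(counts)


def run_job(lines):
    """Run map over every line, group the pairs by key, then reduce each group."""
    grouped = defaultdict(list)
    for line in lines:
        for word, count in map_words(line):
            grouped[word].append(count)
    return dict(reduce_counts(word, counts) for word, counts in grouped.items())


if __name__ == "__main__":
    print(run_job(["big data big cluster", "data node"]))
```

The grouping inside `run_job` stands in for the work the framework does between the two functions; the programmer normally writes only the map and reduce logic.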

It divides the basic problem into a set of smaller manageable tasks and assigns them to a large number of computers (nodes). An ideal MapReduce task is too large for any one node to process, but can be accomplished by multiple nodes efficiently.

MapReduce is named for the two steps at the heart of the framework.

Map step – The master node takes the input, divides it into smaller sub-problems, and distributes them to worker nodes. Each worker node processes its smaller problem, and passes the result back to its master node. There can be multiple levels of workers.

Reduce step – The master node collects the results from all of the sub-problems, combines the results into groups based on the key and then assigns them to worker nodes called reducers. Each reducer processes those values and sends the result back to the master node.
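The map and reduce steps above can be simulated on one machine. In this sketch, threads stand in for worker nodes, the `run_job` function plays the master, and keys are assigned to reducers by a simple hash. The purchase records, the `NUM_REDUCERS` constant and all function names are hypothetical; Hadoop’s real scheduling and shuffle are considerably more involved.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
import zlib

NUM_REDUCERS = 2  # hypothetical number of reducer workers


def split_input(records, num_splits):
    """Master: divide the input into roughly equal sub-problems."""
    return [records[i::num_splits] for i in range(num_splits)]


def map_worker(split):
    """Worker: parse each 'customer,amount' record into a (key, value) pair."""
    pairs = []
    for record in split:
        customer, amount = record.split(",")
        pairs.append((customer, int(amount)))
    return pairs


def partition(key):
    """Choose a reducer for a key using a deterministic hash."""
    return zlib.crc32(key.encode("utf-8")) % NUM_REDUCERS


def reduce_worker(groups):
    """Reducer: total the amounts for every key assigned to it."""
    return {key: sum(values) for key, values in groups.items()}


def run_job(records, num_mappers=3):
    with ThreadPoolExecutor() as pool:
        # Map step: each worker processes one split in parallel.
        mapped = list(pool.map(map_worker, split_input(records, num_mappers)))

        # Group values by key, with one bucket of keys per reducer.
        buckets = [defaultdict(list) for _ in range(NUM_REDUCERS)]
        for pairs in mapped:
            for key, value in pairs:
                buckets[partition(key)][key].append(value)

        # Reduce step: each reducer handles its own bucket of keys.
        reduced = list(pool.map(reduce_worker, buckets))

    # Master: merge the partial results returned by the reducers.
    result = {}
    for partial in reduced:
        result.update(partial)
    return result
```

Calling `run_job(["alice,30", "bob,5", "alice,12"])` totals the purchases per customer. Because every value for a given key lands in the same bucket, each reducer can compute its totals without talking to the others.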

MapReduce can be a huge help in analyzing and processing large chunks of data: buying pattern analysis, customer usage and interest patterns in e-commerce, processing the large amount of data generated in the fields of science and medicine, and processing and analyzing security data, credit scores and other large data-sets in the financial industry.

In 2006, however, Hadoop could not yet handle production search workloads at web scale; it only worked on 5 to 20 nodes at that point. Horizontal scalability was a problem because Hadoop was meant to handle petabytes and exabytes of data distributed over multiple nodes in parallel.

It took a couple of years for Yahoo to move its web index onto Hadoop, and the transition was completed by the end of 2008. In 2008, Yahoo created its production search index, which ran on a 10,000-core Hadoop cluster. Hadoop is used in both production and research environments by many organisations; a few examples are Facebook, AOL, Yahoo and IBM.

IDC predicts that the Hadoop software market will be worth $813 million in 2016. Hadoop has given rise to many start-ups and spurred hundreds of millions of dollars in venture capital investment since 2008. Hadoop software is driving the big data market today, a market expected to exceed $23 billion by 2016.

The second piece of Hadoop is the creation of big data Hadoop platforms that improve efficiency, help analyse large datasets and provide meaningful information for business decisions. Two core big data analytics platforms with Hadoop as their central component are Cloudera and Hortonworks. Both provide Hadoop ecosystem capabilities that enable analysis of more data at lower cost, horizontal scalability, fault tolerance, improved programmability and data access, and coordination, workflow, management and deployment. Nearly every vendor selling a database, business intelligence software or anything else related to data connects to Hadoop in some capacity.

Cloudera, launched in March 2008, was the first commercial Hadoop company. It helped enterprises adopt Hadoop sooner than they otherwise would have.

The third piece of Hadoop is the industry-specific vertical applications that can ride on the core engine and platforms described above. This is where the opportunity lies, for example in analysing customer behaviour in real time for eCommerce. There is huge scope for big data Hadoop applications in the healthcare, education and eCommerce industries. Applications in BI, DW and analytics can be developed on a technology stack involving Hadoop HDFS and MapReduce (with HBase and Hive).

The fourth piece of Hadoop is the creation and administration of data centres that support big data processing and on which the three pieces mentioned above can be deployed. Such data centres should protect the privacy of data and provide adequate security measures.